Daesin Logistics Dispatch Bot

2026-01-01 — present

A logistics dispatch data service that crawls dispatch records with Cheerio and makes them queryable through a KakaoTalk chatbot skill server and a Next.js mobile web. Built with Clean Architecture + TSyringe DI, Express 5, Prisma with SQLite, and Traefik Blue-Green zero-downtime deployment.

System Architecture

Problem Solving

Checking dispatch status manually on the website was operationally inefficient

Crawled the EUC-KR-encoded dispatch pages with Axios + Cheerio; node-cron syncs automatically every hour from 06:00 to 20:00, Monday to Saturday

Manual lookups replaced by 15 automatic crawls per day, with data refreshed hourly during operating hours
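In Node.js, Axios converts a response body to a string as UTF-8 by default, which garbles an EUC-KR page. One way to handle this, sketched below under the assumption that the page is requested with Axios's `responseType: "arraybuffer"` option, is to decode the raw bytes with the WHATWG `TextDecoder` before passing the HTML to Cheerio. The helper name `decodeEucKr` is hypothetical, and the project may equally have used a library such as iconv-lite.

```typescript
// Hypothetical helper: decode a raw EUC-KR response body into a JS string.
// Assumed call site (not executed here):
//   const res = await axios.get(url, { responseType: "arraybuffer" });
//   const $ = cheerio.load(decodeEucKr(res.data));
function decodeEucKr(body: ArrayBuffer | Uint8Array): string {
  // "euc-kr" is a WHATWG Encoding Standard label; Node.js supports it
  // when built with full ICU, which is the default for official builds.
  const decoder = new TextDecoder("euc-kr");
  return decoder.decode(body);
}

// Usage: the two bytes 0xB0 0xA1 encode the Hangul syllable "가" in EUC-KR.
console.log(decodeEucKr(new Uint8Array([0xb0, 0xa1]))); // 가
```

Decoding once at the HTTP boundary keeps the rest of the crawler working with ordinary JavaScript strings.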

Field workers could not check dispatch info without a PC

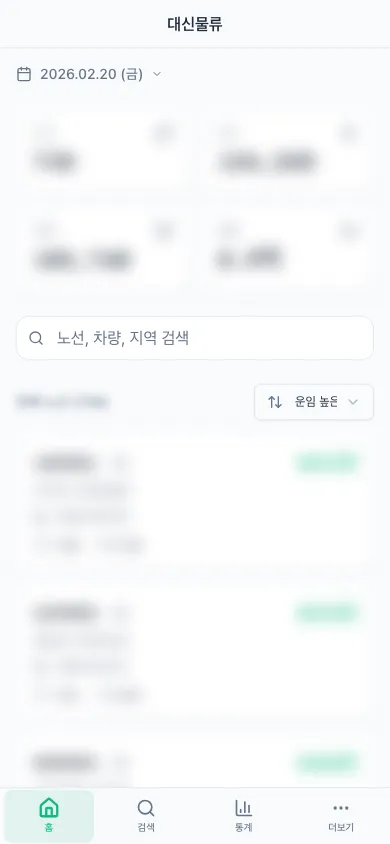

Implemented Kakao i Open Builder skill server + Next.js responsive mobile web + Recharts stats dashboard

Two channels (KakaoTalk + mobile web) let field workers query dispatch info instantly
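A Kakao i Open Builder skill server receives a JSON payload (with fields such as `userRequest.utterance` and `action.params`) and must answer with a `version: "2.0"` envelope whose `template.outputs` holds the reply blocks. The sketch below shows a route-code search handler returning a `simpleText` output. The parameter name `lineCode`, the `Dispatch` shape, and the injected `find` lookup are hypothetical; in an Express 5 app the handler would presumably be called from a POST route as `res.json(handleRouteSearch(req.body, find))`.

```typescript
// Minimal shapes of the skill payload and response; only the fields
// used here are typed, the real payload carries more.
interface SkillRequest {
  userRequest: { utterance: string };
  action: { params: Record<string, string> };
}

interface SkillResponse {
  version: "2.0";
  template: { outputs: Array<{ simpleText: { text: string } }> };
}

// Wraps plain text in the skill response envelope as a simpleText output.
function simpleText(text: string): SkillResponse {
  return { version: "2.0", template: { outputs: [{ simpleText: { text } }] } };
}

// Hypothetical dispatch record and lookup port.
interface Dispatch {
  lineCode: string;
  vehicleNo: string;
  destination: string;
}
type FindByLineCode = (lineCode: string) => Dispatch[];

// Route-code search: uses the block's "lineCode" parameter if present,
// otherwise falls back to the raw user utterance.
function handleRouteSearch(req: SkillRequest, find: FindByLineCode): SkillResponse {
  const lineCode = (req.action.params["lineCode"] ?? req.userRequest.utterance).trim();
  const rows = find(lineCode);
  if (rows.length === 0) {
    return simpleText(`No dispatch found for route ${lineCode}.`);
  }
  const lines = rows.map((d) => `${d.vehicleNo}: ${d.destination}`);
  return simpleText([`Route ${lineCode}`, ...lines].join("\n"));
}
```

Keeping the envelope in one helper means every search block (route, vehicle, destination) returns a response the platform accepts.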

Changes to external dependencies forced changes to business logic

Clean Architecture layer separation, interface binding via TSyringe DI tokens, and the Value Object pattern to enforce domain rules at the type level

Swapping a dependency changes only the infrastructure layer; business logic stays untouched
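A minimal sketch of the port-and-adapter idea behind this result, using hypothetical names (`DispatchSource`, `DispatchRepository`, `SyncDispatchUseCase`, `DISPATCH_SOURCE`). The use case depends only on interfaces; in the project those interfaces are bound to concrete classes through TSyringe tokens. The TSyringe wiring is shown as comments so the block runs without the `tsyringe` and `reflect-metadata` packages, and the adapters are passed in by hand instead.

```typescript
// Hypothetical domain record.
interface DispatchRecord {
  lineCode: string;
  vehicleNo: string;
}

// Port implemented by an infrastructure crawler (e.g. a Cheerio adapter).
interface DispatchSource {
  fetchAll(): Promise<DispatchRecord[]>;
}

// Port implemented by a persistence adapter (e.g. a Prisma repository).
interface DispatchRepository {
  saveAll(rows: DispatchRecord[]): Promise<number>;
}

// Assumed TSyringe wiring (not executed here):
//   export const DISPATCH_SOURCE = Symbol("DispatchSource");
//   container.register<DispatchSource>(DISPATCH_SOURCE, { useClass: CheerioDispatchSource });
//   @injectable()
//   class SyncDispatchUseCase {
//     constructor(@inject(DISPATCH_SOURCE) source: DispatchSource, ...) { ... }
//   }

// Application-layer use case: it knows the ports, never the adapters.
class SyncDispatchUseCase {
  private readonly source: DispatchSource;
  private readonly repo: DispatchRepository;

  constructor(source: DispatchSource, repo: DispatchRepository) {
    this.source = source;
    this.repo = repo;
  }

  // Pulls the latest records from the source and persists them.
  async execute(): Promise<number> {
    const rows = await this.source.fetchAll();
    return this.repo.saveAll(rows);
  }
}

// Usage with stub adapters (sample values): replacing them needs no change above.
const stubSource: DispatchSource = {
  fetchAll: async () => [{ lineCode: "A1", vehicleNo: "12가3456" }],
};
const stubRepo: DispatchRepository = {
  saveAll: async (rows) => rows.length,
};
new SyncDispatchUseCase(stubSource, stubRepo).execute().then((n) => console.log(n)); // 1
```

Because the use case only sees `DispatchSource`, replacing the crawler or the database means registering a different class against the same token.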

Manual restarts during deployment caused service downtime

Traefik file-provider Blue-Green deployment, with deploy.sh automating build → health check → YAML rewrite → traffic switch

Zero-downtime deploys, with no Docker socket exposed to Traefik
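The switch step of deploy.sh can be modeled as follows. The real script is shell; this TypeScript version only sketches the decision logic, and the service names (`app-blue`, `app-green`), router name, port 3000, and `Host` rule are assumptions. Traefik's file provider (with `watch` enabled) reloads dynamic configuration when the file changes, so rewriting the router's `service` is enough to move traffic. If the new color never passes its health check, the file is left pointing at the old color, which is one plausible reading of the rollback step.

```typescript
type Color = "blue" | "green";

// The idle color that the next release is deployed to.
function nextColor(active: Color): Color {
  return active === "blue" ? "green" : "blue";
}

// Renders a Traefik dynamic (file provider) config routing all traffic
// to one color. Host, names and port are placeholders.
function renderDynamicConfig(active: Color, host = "dispatch.example.com"): string {
  return [
    "http:",
    "  routers:",
    "    dispatch:",
    `      rule: "Host(\`${host}\`)"`,
    `      service: app-${active}`,
    "  services:",
    "    app-blue:",
    "      loadBalancer:",
    "        servers:",
    '          - url: "http://app-blue:3000"',
    "    app-green:",
    "      loadBalancer:",
    "        servers:",
    '          - url: "http://app-green:3000"',
    "",
  ].join("\n");
}

// Health-checks the idle color up to `attempts` times (a real script
// would wait between attempts). Switches traffic only on success;
// otherwise leaves the config alone so traffic stays on `active`.
async function switchTraffic(
  active: Color,
  isHealthy: (color: Color) => Promise<boolean>,
  writeConfig: (yaml: string) => Promise<void>,
  attempts = 5,
): Promise<Color> {
  const candidate = nextColor(active);
  for (let i = 0; i < attempts; i++) {
    if (await isHealthy(candidate)) {
      await writeConfig(renderDynamicConfig(candidate));
      return candidate;
    }
  }
  return active;
}
```

Since only a file is rewritten, Traefik never needs access to the Docker socket to learn which containers are live.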

Project Description

A service that automatically collects logistics dispatch data and makes it queryable in real time via a KakaoTalk chatbot and a mobile web. The backend uses Clean Architecture to separate the domain, application, and infrastructure layers, with TSyringe-based dependency injection; it crawls dispatch data with Cheerio + Axios and stores it in SQLite through Prisma. node-cron syncs the data every hour from 6 AM to 8 PM, Monday to Saturday, and a Kakao i Open Builder skill server provides search by route code, vehicle number, and destination, along with daily statistics. The frontend is a Next.js mobile web that fetches data with TanStack Query and visualizes statistics with Recharts. Deployment follows a Blue-Green zero-downtime architecture with a Traefik reverse proxy in Docker, and deployment scripts automate the full cycle: build, health check, traffic switch, and rollback.
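The daily statistics served by the skill server and charted with Recharts amount to a group-by over stored dispatch rows. A minimal sketch, assuming a hypothetical row shape with a `date` string (`YYYY-MM-DD`) and a `lineCode`. The output is a flat array sorted by date, the form Recharts chart components accept as their `data` prop (for example, a `Bar` with `dataKey="dispatches"`).

```typescript
// Hypothetical stored row: one dispatch on a given day for a given route.
interface DispatchRow {
  date: string; // "YYYY-MM-DD"
  lineCode: string;
}

// One point on the daily chart.
interface DailyStat {
  date: string;
  dispatches: number; // total rows that day
  routes: number; // distinct route codes that day
}

// Groups rows by date and returns one stat per date, oldest first.
function dailyStats(rows: DispatchRow[]): DailyStat[] {
  const byDate = new Map<string, { dispatches: number; routes: Set<string> }>();
  for (const row of rows) {
    let bucket = byDate.get(row.date);
    if (!bucket) {
      bucket = { dispatches: 0, routes: new Set<string>() };
      byDate.set(row.date, bucket);
    }
    bucket.dispatches += 1;
    bucket.routes.add(row.lineCode);
  }
  // ISO dates sort correctly as plain strings.
  return Array.from(byDate, ([date, b]) => ({
    date,
    dispatches: b.dispatches,
    routes: b.routes.size,
  })).sort((a, b) => (a.date < b.date ? -1 : a.date > b.date ? 1 : 0));
}
```

In practice the same aggregation could be pushed into a Prisma `groupBy` query; doing it in memory is only the simplest version to show.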

Highlights

- Clean Architecture + TSyringe DI layer separation design

- Cheerio crawling + node-cron hourly auto-sync (Mon-Sat)

- KakaoTalk chatbot skill server (route/vehicle/destination search)

- Traefik Blue-Green zero-downtime deploy + automated deploy scripts

- Next.js mobile web + Recharts statistics dashboard

Performance Metrics

| Metric | Before | After |
|---|---|---|
| Crawl frequency | Manual lookup | 15 automatic runs per day |
| Deploy downtime | Manual restart | 0 s (Blue-Green) |
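The figure of 15 runs per day follows from an hourly schedule from 06:00 through 20:00 inclusive (20 − 6 + 1 = 15). In node-cron's five-field syntax that schedule would plausibly be `0 6-20 * * 1-6`, but the exact expression is not given in the source. The helper below is a hypothetical range expander, not node-cron's own parser, used only to check the arithmetic.

```typescript
// Assumed node-cron expression for "hourly, 06:00-20:00, Mon-Sat".
// Fields: minute hour day-of-month month day-of-week (0 or 7 = Sunday).
// Assumed registration (not executed here):
//   cron.schedule(SYNC_SCHEDULE, runSync, { timezone: "Asia/Seoul" });
const SYNC_SCHEDULE = "0 6-20 * * 1-6";

// Expands a simple cron field of comma-separated numbers and "a-b"
// ranges (no steps or wildcards) into the values it matches.
function expandField(field: string): number[] {
  const values: number[] = [];
  for (const part of field.split(",")) {
    const bounds = part.split("-").map(Number);
    const lo = bounds[0];
    const hi = bounds.length > 1 ? bounds[1] : lo;
    for (let v = lo; v <= hi; v++) values.push(v);
  }
  return values;
}

const [, hourField, , , dayField] = SYNC_SCHEDULE.split(" ");
console.log(expandField(hourField).length); // 15 runs per day
console.log(expandField(dayField).length); // 6 days per week
```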

Tech Decisions

- Clean Architecture + TSyringe DI: layer separation keeps external dependencies (crawler, Kakao API, DB) swappable, with interfaces bound via DI tokens

- SQLite: avoids PostgreSQL's operational overhead for a single-server, read-heavy workload; the database file sits on a mounted volume, keeping the Docker setup portable

- Traefik file provider: switches traffic by rewriting a YAML file instead of exposing the Docker socket, which keeps the setup secure and rollback simple

Lessons Learned

- Learned to decouple business logic from external dependencies (crawler, DB, Kakao API) by separating Clean Architecture layers with a TSyringe DI container

- Learned the chatbot platform's request/response protocol and scenario-block integration by implementing a Kakao i Open Builder skill server directly

- Gained hands-on experience with traffic switching, health checks, and rollback strategies for zero-downtime deployment by designing a Traefik-based Blue-Green deployment with automated deploy scripts

- Learned domain modeling that avoids primitive obsession by enforcing domain rules at the type level with the Value Object pattern (LineCode, SearchDate)
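A Value Object validates once at construction, so any function that receives a `LineCode` or a `SearchDate` can rely on the rule without re-checking a raw string. The validation rules below (a route code of 1 to 10 uppercase letters or digits, and a real `YYYY-MM-DD` calendar date) are hypothetical; the source names the types but not their rules.

```typescript
class LineCode {
  readonly value: string;

  // Private: instances can only be created through `of`, after validation.
  private constructor(value: string) {
    this.value = value;
  }

  // Normalizes and validates; the rule is an assumption for illustration.
  static of(raw: string): LineCode {
    const v = raw.trim().toUpperCase();
    if (!/^[A-Z0-9]{1,10}$/.test(v)) {
      throw new Error(`Invalid line code: "${raw}"`);
    }
    return new LineCode(v);
  }

  equals(other: LineCode): boolean {
    return this.value === other.value;
  }
}

class SearchDate {
  readonly value: string; // canonical "YYYY-MM-DD"

  private constructor(value: string) {
    this.value = value;
  }

  static of(raw: string): SearchDate {
    const m = /^(\d{4})-(\d{2})-(\d{2})$/.exec(raw.trim());
    if (!m) throw new Error(`Invalid date format: "${raw}"`);
    const y = Number(m[1]);
    const mo = Number(m[2]);
    const d = Number(m[3]);
    // Round-trip through Date.UTC to reject impossible dates such as 2026-02-30.
    const dt = new Date(Date.UTC(y, mo - 1, d));
    if (dt.getUTCFullYear() !== y || dt.getUTCMonth() !== mo - 1 || dt.getUTCDate() !== d) {
      throw new Error(`Invalid calendar date: "${raw}"`);
    }
    return new SearchDate(m[0]);
  }
}
```

A repository method typed as `findByLine(code: LineCode, date: SearchDate)` then cannot be called with an unchecked string, which is the type-level enforcement described above.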